llama3

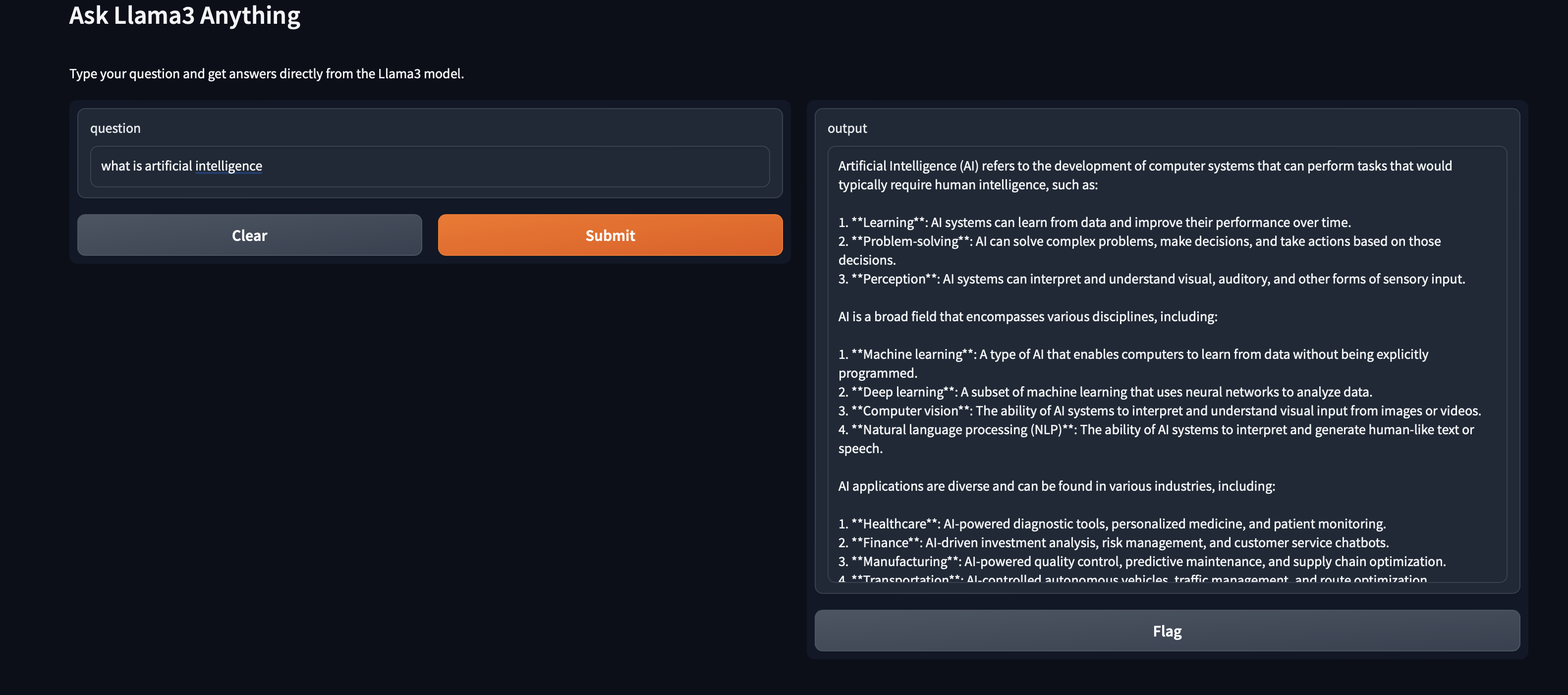

Run DeepSeek-R1 and Phi4 Locally with Gradio and Ollama

Run DeepSeek-R1 and Phi4 locally with a Gradio web interface and Ollama. Full privacy, zero cost, and complete control over your AI models.

Recent Posts

- How LLM Tool Calling Actually Works: A peek under the hood

- Run Gemma 4 on Raspberry Pi 4B: Complete Free Local AI Setup Guide

- Setup a Simple Web Page Watcher with Phone Notification on Raspberry Pi

- How to Make and Receive SIP to PSTN Calls Using Twilio and Zoiper (Step-by-Step Setup Guide)

- WebGL 3D: An interactive 3D cube (fun experiment)